Hazard Identification Software Technology delivers effective Risk Assessment and Control to improve Workplace Safety

AI Driven Hazard Identification in the Workplace

Ever-increasing bandwidth and computing power, in particular that supported by recent developments in high-performance computing clusters, continue to open new opportunities for a myriad of AI driven software applications as the cost of compute nodes continues to fall. This level of technology is now capable of delivering low cost solutions for a wide range of businesses, as demonstrated by SpillDetect, which offers a highly attractive ROI for those looking to improve hazard identification in the workplace.

Furthermore, research has shown that humans have a poor perception of risk (source), a phenomenon that can lead to sub-standard hazard identification, risk assessment and control. This in turn can have potentially disastrous consequences (source) as a result of skewed or biased decision making (source). Notably, we are all susceptible to these effects, irrespective of level of education (source), as they are driven by processes that are hardwired into cognition (source).

As noted within this blog, humans have an almost unique ability to ‘see’ and then communicate what they have ‘observed’ to others, at a price determined by the labour market. However, what humans ‘see’ is not necessarily what they ‘observe’, a result of inbuilt cognitive biases and heuristics (source). Therefore, while humans may provide a cost effective warning system, it is one that is fundamentally flawed. So, how can we achieve wholesale improvements in hazard identification by deploying alternative, cost effective, technology driven solutions?

Hazard Identification Risk Assessment and Control

SpillDetect provides a vivid example. This ground-breaking technology delivers a low cost, low bandwidth solution that allows those that adopt it to pre-empt and negate risk associated with slips and trips, along with removing the costs of personal injury claims, through providing real-time hazard identification, risk assessment and facilitating control. But how does it achieve this?

Quite simply, SpillDetect replaces human eyes with cameras by tapping into existing CCTV networks. Not only does this provide superior coverage, given the number of cameras typically found within retail stores compared to the number of staff walking the floor, it also allows businesses to release latent value from their investment in existing CCTV infrastructure by analysing the huge amount of data it captures, without the need for any network upheaval.

Furthermore, humans ‘see’ but do not necessarily ‘observe’ (source), a trait common to all that underpins the poor levels of hazard identification typically found within the workplace. However, by using an AI driven application that is programmed to follow strict rules when analysing data captured by CCTV, SpillDetect removes humans from a function in which they typically deliver poor performance: risk assessment and reporting.

In addition, by serving real-time notifications and alerts to humans, it allows them to deploy critical thinking and risk mitigation strategies, areas in which they excel and wherein technology struggles to perform (source). By correctly integrating human capital with IT (source), this combination delivers a cost effective solution with performance greater than the sum of its parts, which can prove to be a significant source of competitive advantage (source). It also lets businesses ‘See Everything’ and ‘Know Everything’, so that they can differentiate themselves by becoming the safest place to work and shop.

Hazard Identification Software Technology

So, how do you solve the issue of hazard identification through deploying AI software technology that is built upon existing CCTV networks? Let’s walk through some of the challenges that we faced with the development of our proprietary Spill Detection software.

The first challenge is to understand the areas of interest in what is pretty much unbounded geometric space. For the hazards that typically drive slips and trips, these areas centre on floor obstructions. In addition, for a product to deliver commercial value it needs to be rapidly deployable at scale, which in turn demands minimal setup and system configuration for potential end users.

The first step is to overcome the problem of having to laboriously enter floor plans to determine areas of interest on a store-by-store basis, a hugely time-consuming process. As you can see from the video at the top of the page, the proprietary patent pending technology that underpins SpillDetect automatically compensates for any kind of floor plan and processes the images to give the application high levels of resilience.

Traditionally, areas of interest are defined with respect to a specific camera position. Therefore, if the camera orientation changes, the area of interest moves with it. For example, if an area of interest was defined in front of an emergency exit and the camera monitoring this zone is disturbed by a few degrees during maintenance or cleaning, the area of interest will shift by a corresponding amount. As a result, it may end up monitoring a redundant space in front of a wall. This effect has the potential to render these types of applications unusable in practice, as they not only require complex and lengthy setup but also continual monitoring, adjustment and re-calibration to account for any potential camera disturbances.
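To give a feel for how quickly a camera-relative area of interest drifts, here is a rough worked calculation. It assumes an idealised pinhole camera that pans about its optical centre; the focal length, distances and function names are illustrative assumptions, not parameters of SpillDetect or of any particular CCTV system.

```python
import math


def pixel_shift(focal_px: float, point_angle_deg: float, pan_deg: float) -> float:
    """Horizontal image displacement (pixels) of a fixed scene point after a pan.

    Assumes an ideal pinhole camera: a point at bearing `point_angle_deg` from
    the optical axis projects to x = focal_px * tan(bearing).
    """
    before = focal_px * math.tan(math.radians(point_angle_deg))
    after = focal_px * math.tan(math.radians(point_angle_deg - pan_deg))
    return after - before


def floor_offset_m(distance_m: float, pan_deg: float) -> float:
    """Approximate sideways drift (metres) of a camera-fixed zone at a given range."""
    return distance_m * math.tan(math.radians(pan_deg))


if __name__ == "__main__":
    # Hypothetical numbers: 1000 px focal length, zone 10 m away, 3 degree knock.
    print(round(pixel_shift(1000.0, 0.0, 3.0), 1))
    print(round(floor_offset_m(10.0, 3.0), 2))
```

Under these assumptions, a knock of only three degrees moves a zone defined in image coordinates by roughly half a metre at ten metres range, which is enough to slide it off a doorway and onto the adjacent wall.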

However, the proprietary technology developed for SpillDetect provides inbuilt resilience against changes in camera orientation induced through disturbances, a feature demonstrated by the on-page video, wherein the camera position is moved at around 0:51. This resilience also opens up new avenues for our technology, in particular for hazard identification in the workplace, to improve risk assessment and control.

Notably, identifying hazards with existing solutions requires a costly mix of different sensor types. For example, LiDAR provides depth perception but cannot provide texture measurements, which require a camera (source). Not only does this lead to a mix of expensive sensor types to achieve complete depth and texture perception, it also necessitates ‘sensor fusion’, which is one of the main challenges facing related AI driven software applications.

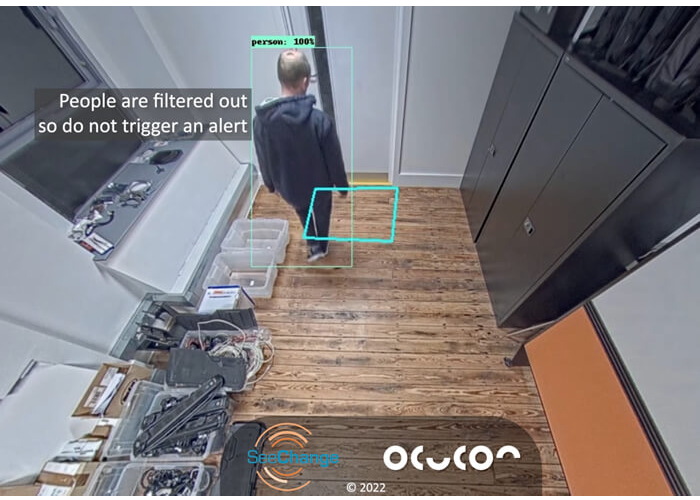

However, by comparing frames captured from standard CCTV cameras, our underlying technology can recognise when an area of floor that was previously ‘visible’ becomes obscured. The underlying model is also highly sophisticated and understands human movement, which it ignores, as illustrated at around 16 seconds into the attached clip. As soon as an object is placed within the area of interest it is detected, as illustrated by the change in colour to red for the object and brown for the area of interest at around 29 seconds.
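The general idea of comparing frames can be illustrated with a deliberately simple reference-frame difference. This is a minimal sketch of a textbook technique, not the proprietary SpillDetect model: it does not ignore human movement or compensate for camera motion, and the frame format, threshold and function name are all assumptions made for illustration.

```python
from typing import List, Tuple

Frame = List[List[int]]          # grayscale image, row-major, values 0..255
Roi = Tuple[int, int, int, int]  # top, left, bottom, right (bottom/right exclusive)


def obscured_fraction(reference: Frame, current: Frame, roi: Roi,
                      threshold: int = 30) -> float:
    """Fraction of pixels in the area of interest that differ from the reference.

    A pixel counts as 'obscured' when its brightness differs from the empty-floor
    reference frame by more than `threshold` (an assumed, tunable value).
    """
    top, left, bottom, right = roi
    changed = 0
    total = 0
    for r in range(top, bottom):
        for c in range(left, right):
            total += 1
            if abs(current[r][c] - reference[r][c]) > threshold:
                changed += 1
    return changed / total if total else 0.0


if __name__ == "__main__":
    empty_floor = [[200] * 4 for _ in range(4)]
    with_object = [row[:] for row in empty_floor]
    for r in (1, 2):
        for c in (1, 2):
            with_object[r][c] = 50  # a dark 2x2 object placed on the floor
    print(obscured_fraction(empty_floor, with_object, (0, 0, 4, 4)))  # 0.25
```

A production system would need far more than this, for example handling lighting changes and people walking through the scene, which is where the more sophisticated model described above comes in.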

For any defined area of interest, the technology provides inbuilt resilience to changes in camera orientation that may be induced through maintenance or cleaning cycles. This is demonstrated at around 0:51; note how the area of interest remains fixed and does not move when the camera orientation changes. By applying a set of business rules programmed into the decision engine, it can assess how long an area of flooring within a specific area of interest has been obscured and provide an appropriate output. Upon determining a hazard, the application can serve real-time, AI driven, risk assessed and controlled notifications to humans. They in turn can use their critical thinking to deploy timely risk mitigation strategies and address the hazards identified by this sophisticated, AI driven application.
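As an illustration of what a time-based business rule might look like, the sketch below raises an alert only once an area has stayed obscured for a minimum period. The class name, the ten-second threshold and the reset-on-clear behaviour are assumptions chosen for the example; the actual rules in the SpillDetect decision engine are not described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DwellRule:
    """Hypothetical rule: alert when an area stays obscured for min_seconds."""
    min_seconds: float
    _since: Optional[float] = None  # time the current obscured spell began

    def update(self, obscured: bool, timestamp: float) -> bool:
        """Feed one observation; return True if an alert should be raised."""
        if not obscured:
            self._since = None  # floor visible again, so reset the timer
            return False
        if self._since is None:
            self._since = timestamp
        return timestamp - self._since >= self.min_seconds


if __name__ == "__main__":
    rule = DwellRule(min_seconds=10.0)
    observations = [(True, 0.0), (True, 5.0), (False, 6.0), (True, 7.0), (True, 17.0)]
    for obscured, t in observations:
        print(t, rule.update(obscured, t))
```

Requiring a continuous spell, rather than a single changed frame, is one simple way to avoid alerting on something that only passes briefly through the area.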

Looking to benefit from the operational efficiencies and improved competitive positioning that our ground-breaking computer vision products can deliver? Why not contact us now to learn how our technology can help you to differentiate your business by becoming the safest place to work, shop and eat?